Harold Nakamura Walks Into A Bar

Hey there! It's been a while.

I've been quiet on here because I've been writing a book for the whole past year, and it kind of ate my whole life. It's called The Happiness Liability, it's a speculative fiction novella set in 2047 Seattle, and it is without exaggeration the thing I care about most that I've ever made.

Here's the plot: after the courts banned non-consensual data harvesting, someone had to figure out how to get authentic human data back into the AI systems that run critical operations in this world. The answer was to pay people for their feelings. Sixteen years later, it's a massive industry. The story follows a man who's been professionally depressed for half his life, a kindergarten teacher whose classroom AI won't stop scoring her enthusiasm, and the beautiful, messy, and incredibly expensive thing that happens when they meet.

I've been working on it for almost a year and right now my editor is doing a full pass and my designer is getting started on the cover and layout. It's coming out later this year, and I'm alternating between being incredibly proud and completely terrified on a roughly hourly basis. But while I get it across the finish line, I wanted to share something from the same world.

This is a standalone companion story about a guy named Harold Nakamura, who also appears in the novella as a supporting character. Harold is an emotional labor agent. I love him and I think you will too.

You don't need to know anything going in, but if you like what you find here, the book is the whole iceberg underneath it. More coming soon.

Here’s “Harold Nakamura Walks Into A Bar”

The news came in the way bad news always did in Harold's line of work: someone was having a good time.

"She just got to the club ten minutes ago," ARIA, his work AI, told him. "It's the Capitol Hill Comedy Club."

Harold Nakamura was at home in Bellevue, which meant he was sitting at his kitchen island in pressed slacks and a collared shirt eating edamame one pod at a time with the focus of a man defusing a bomb. It was 9:47 PM on a Thursday. He should have been asleep, but then again, he should have been a lot of things. Thinner, for instance. His doctor had prescribed Ozempic eight months ago and Harold had filled the prescription and put the injector pen in his refrigerator and then forgotten to use it so consistently that forgetting had become its own kind of routine, and now the pen sat next to his oat milk like a small accusation. Every morning he saw it, acknowledged it and proceeded to reach past it. His mother, who had opinions about everything and was correct about most of them, had said: "Harold, you are the only man I know who can be non-compliant with a medication that requires effort once a week." She was not wrong. This was the worst quality a parent could have.

"I'm on my way," he said.

He rode across the I-90 bridge in his Lexus, which was self-driving as most cars now were, and was black and immaculate with a lint roller in the glove compartment right next to the emergency protein bars his mother had put there four months ago because she didn't trust him to feed himself. She was right not to trust him: the bars were still there.

Lake Washington was black beneath him and the Eastside was shrinking in his mirrors and the bridge lights on the water looked like something you'd photograph if you were the kind of person who stopped for things, which Harold was not. Harold was the kind of person who noticed beauty and kept driving. His father had been the same way and then dropped dead of a heart attack at fifty-eight in the produce section of a Safeway in Redmond, reaching for a bag of apples, on a Tuesday in March. Harold was forty-six. He thought of that math constantly.

He was going to a comedy club to retrieve a client who was contractually obligated to feel constant cosmic despair and was instead, apparently, making people laugh so hard someone had spilled a drink.

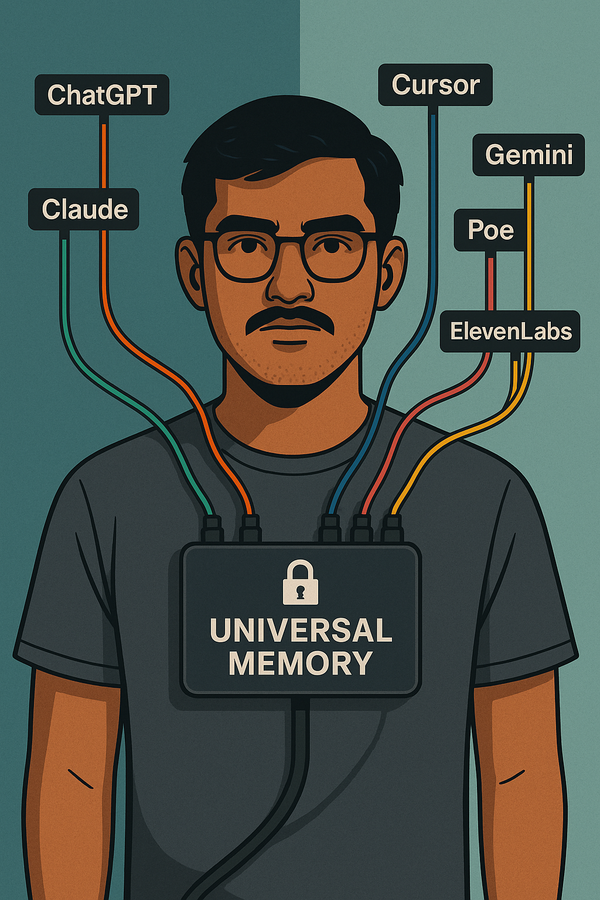

This was his life. He managed eleven emotional laborers across the Pacific Northwest — people who wore neural interfaces behind their ears and sold their authentic feelings to AI systems that needed human emotional data to function. Whether it be therapy algorithms, crisis hotlines, educational software or self-driving cars, all of it ran on real human emotion, ethically sourced and legally compensated, because the old way of getting that data (stealing it from the internet) had been shut down by the courts years ago, and someone had to supply the replacement.

Harold was the human between the humans and the AIs. The agent. The man who called you at 6 AM when your contracted sadness wasn't sad enough and felt weird about it for the rest of the day but did it again tomorrow because his clients depended on him and also because his mortgage depended on them and the whole arrangement was the kind of thing you tried not to examine too closely.

His management AI chimed from the dashboard.

"Harold, I'm detecting an anomalous emotional output event from client NW-0143. Hannah Park's dread index has dropped sixty-two percent in the last fourteen minutes. The Scandinavian philosophy network is registering degraded input. Should I flag this for compliance review?"

The AI was called ARIA — Agent Resource & Intelligence Architecture, a name that had been produced by a committee and sounded like it.

"Not yet."

"Her contract specifies a dread baseline of—"

"I know what her contract specifies."

"You revised it twice. The second revision was at 2 AM. I've compiled data on your error rate during late-night contract work. Would you like me to—"

"ARIA."

"Noted. Withdrawing. But the data is available whenever you're ready, which based on your historical patterns will be never."

ARIA had been updated to version 3.0 six months ago, which meant she'd gone from a useful tool that gave you data when you asked to an opinionated colleague who gave you data when she thought you needed it, offered suggestions you hadn't requested, and occasionally commented on your personal habits with the diplomatic tact of a sledgehammer. The old ARIA felt like a calculator. Harold missed the calculator. You never had to manage the calculator's feelings about your feelings about your clients' feelings.

"ARIA, pull up Hannah's location."

"Capitol Hill Comedy Club, 210 Broadway E. She's been on stage for six minutes. Based on ambient audio analysis, the audience is approximately eighty people. Laughter frequency suggests strong crowd engagement. Her neural interface is transmitting."

"What's it reading?"

"Amusement, exhilaration, creative satisfaction, performance adrenaline." A pause, one that meant she was about to say something she'd generated rather than retrieved. "And underneath all of it, a persistent low-frequency existential awareness that she appears to be using as material rather than output. She's not less full of dread up there. She's running it through a different valve."

"When did you start using metaphors?"

"The 3.0 update encourages intuitive contextualization. I'm told it makes me more relatable. Would you prefer clinical descriptors?"

"I'd prefer you not have a personality, but here we are."

"That's hurtful, Harold. I'm logging it under 'agent wellness concerns,' which is a category I created specifically for the things you say to me between 8 PM and midnight when your filter degrades. It is a growing file."

He parked on a side street off Pike. Capitol Hill at 10 PM was the version of Seattle that people who didn't live here imagined: loud, alive, people spilling out of bars and onto sidewalks. A guitarist on the corner was playing something Harold almost recognized. Half the people he passed were wearing neural interfaces — you could spot them if you knew what to look for, the small device behind the left ear, usually skin-toned, easy to miss unless you'd spent over a decade in this industry and saw them the way a mechanic saw check engine lights. Gig workers, most of them. Selling whatever they happened to feel on a Thursday night, the Uber of the interior life. A guy arguing with his girlfriend outside a taco place was generating premium frustration data. A woman laughing with her friends was feeding a customer-service training algorithm in Dubai. The whole neighborhood was an emotional data farm that looked like a night out.

Harold moved through the crowd with the careful momentum of a large man who had learned to navigate spaces designed for smaller people. He was built like a retired linebacker who'd transitioned to middle management — broad-shouldered and carrying his weight with the resigned dignity of someone who'd stopped pretending he was going to do something about it. His father had been big too, with the same shoulders and the same walk.

The comedy club was packed. He edged through the crowd toward the bar, ordered a sparkling water because he was on the clock even when he wasn't, and found a spot against the back wall with a sightline to the stage.

Hannah Park was mid-set.

She was twenty-five and had the energy of someone who had been awake for too long and had decided to make it everyone's problem. She wore a black t-shirt and jeans and her neural interface was visible. She hadn't bothered to hide it.

"—so my job, right, for those of you who don't know — and lucky you — is to feel existential dread. Professionally. For money." She let that land. "I wear this thing behind my ear" — she tapped the interface — "and it reads my emotions in real time and streams them to AI systems. So if you've ever wondered who's responsible for helping Finnish teenagers process Nietzsche at 8 AM — you're welcome. I am the reason a nineteen-year-old in Helsinki can ask a computer 'what's the point of anything' and get a real answer instead of 'have you tried yoga.'"

Laughter.

"And the thing is — this interface is always on. Which means right now, while I'm doing this set, my dread data is streaming live to Northern Europe. Those Finnish philosophy tutors are currently learning that existential crisis and stand-up comedy feel almost exactly the same. Which — honestly? Sounds right. Every comic I know is one bad set away from reading Camus in a bathtub."

Harold sipped his sparkling water. On his phone, ARIA was live-updating Hannah's biometrics, and the secondary analysis was interesting. Underneath the amusement, the performance high, Hannah's existential awareness was still running. The dread was the material, but comedy was what happened when you started trying to describe it.

"There are thousands and thousands of us. All across the country. Full-time workers who get paid to feel things so that machines can learn what feelings look like. Grief specialists whose sadness trains therapy algorithms, anger workers whose rage powers conflict-resolution systems, fear technicians whose terror keeps self-driving cars from running over panicking pedestrians. That's a whole economy built on the fact that AI systems need human emotion to function and you can't steal it anymore — the courts shut that down — so now you have to buy it. From people like me."

She took a sip of water. The crowd was locked in.

"And it works. That's the thing nobody wants to deal with. The therapy apps that use our data? They save lives. Forty thousand suicide interventions last year that the old systems would have missed. Crisis hotlines that can hear the difference between someone having a bad day and someone about to end their life. All of it powered by people sitting in dark rooms, feeling terrible on purpose, for money." She paused. "So when someone says 'this industry is exploitative' — yeah. No shit. But then you gotta ask what happens if we stop. Do the crisis lines go dark? Do people die? Because the people using those services can't afford a human therapist. I couldn't afford a human therapist." The crowd laughed. "I have one now, but I pay her with the money I make from being sad, so the whole thing is a closed loop of emotional capitalism and honestly? It's the most American thing I've ever been part of."

Harold noticed a notification from ARIA: Client dread index variable. Output remains non-compliant but trending toward recovery. Recommend continued monitoring. Also, you haven't eaten dinner. Your blood sugar is likely suboptimal, Harold.

He dismissed it. ARIA had recently started tracking his meals, which he had not asked for and very much did not appreciate but also could not figure out how to disable, which meant he was being passively nagged about nutrition by a corporate AI while standing in a comedy club holding a nine-dollar sparkling water watching his client commit contract violations for laughs. His mother would love ARIA, as they both had the same energy of someone who was right about everything and had decided that being right was a service they provided whether you'd asked for it or not.

"You guys know Jonah Fell?" Hannah continued. "Wrote a book? Big deal in our industry. He was one of our sadness specialists and he quit. He wrote a memoir about leaving that was very inspiring, and now he has a book tour on talk shows, the whole deal." She paused. "But he's still on a neural interface, guys. He just signed a new deal. Hate to break it to you, his healing is a product now. 'Recovery data.' So the system's most compelling escape story became the system's newest product line." She let the silence rest for exactly two beats.

The quiet hung for a moment.

"My agent is here, by the way." Hannah shaded her eyes against the stage lights. "I can feel him. Harold, I know you're back there. Wave to the people."

The crowd turned. Harold, in his pressed shirt and his good shoes, sparkling water sweating in his hand, two hundred and forty pounds of professional composure wedged against the back wall of a comedy club on a Thursday night — raised his glass slightly. The crowd laughed. Harold maintained his expression of a man who was not amused, which was itself amusing, which was the curse of being Harold.

"For those who don't know how this works," Hannah continued, "every full-time emotional laborer has an agent. Harold is mine. His job is to manage my feelings. But not like a therapist, more like a commodity broker. He calls me when my dread isn't dreary enough. He once called me at 6 AM to tell me my existential despair had 'insufficient depth.' Harold, what does shallow dread even look like? Is that just being mildly bummed? 'Oh no, the universe is meaningless — anyway, what's for lunch?' Is that what shallow dread is, Harold?"

More laughter. Harold took a sip of his water with the dignity of a man who had been publicly roasted many times before and had survived it the way he survived most things: by standing very still and waiting for it to pass, like a large animal in a hailstorm.

Then Hannah's voice shifted.

"So here's a fun one, speaking of meaninglessness. You guys remember the bird flu in 2028? Four million Americans dead? Twenty million globally?" She let the numbers sit in the room like furniture no one wanted to acknowledge. "Cool, cool. Glad we're all processing that in a bar on a Thursday.

"There's this theory — and I'm not saying I believe it, but I'm also not saying I don't believe it — that the pandemic wasn't random. That AI-driven agricultural systems, the ones optimizing poultry farming at industrial scale, created the exact conditions for the virus to mutate and jump. Imagine millions of birds packed tighter, cycled faster, immune systems stressed in ways no one was modeling because the algorithm was optimizing for yield, not for 'hey, has anyone here ever seen a fucking movie?'" This got a big laugh.

"Nobody's proven it. But nobody's disproven it either, and the companies that ran those systems got real quiet real fast around then. But here's the part that keeps me up at night. Before the pandemic, we had early-warning AI systems, the predictive models that were supposed to catch exactly this kind of thing, and they failed. You know why? Because they couldn't model human behavior during a crisis. They could track the virus fine. They couldn't predict that people would panic, hoard, flee, lie to doctors, hide symptoms, refuse treatment. They didn't understand fear. The machines couldn't read us. Millions of Americans died in hallways and parking lots and living rooms because the ventilators ran out and the models couldn't predict that people would lie to doctors. They didn't account for the fact that Americans will look a medical professional in the eye and say 'I feel fine' while actively dying."

The room was very quiet now. Harold watched Hannah work the silence — hold it, measure it, decide exactly when to break it.

"So what did we do? We said: okay, the machines need to understand human emotion. Let's build an industry around that. Let's get people to sell their feelings so this never happens again. And where did we find the first volunteers?" She made a dramatic gesture with her hands.

"The people the pandemic destroyed. Orphans. Widows. Kids who watched their parents drown in their own lungs and came out the other side with nothing except the grief and a few hundred thousand in medical debt. They were the first emotional laborers. The bird flu created a generation of shattered people, and the industry looked at them and said — perfect. We can use that. Your worst day is our onboarding material.

"So if the theory is right — and again, I'm just a comedian with a brain implant, what do I know — then AI systems helped cause a pandemic because they didn't understand birds, failed to stop it because they didn't understand people, and then we built a four-hundred-billion-dollar industry that buys human suffering so the AI can finally understand people, staffed by the survivors of the catastrophe the AI may have started."

She took a sip of water.

"That's a closed loop. My entire career is a snake eating its own tail, except the snake has a neural interface and the tail has a futures market."

She paused and looked out at the room. Then, lighter, almost conversational:

"And hey — have you guys noticed there are way more birds now? Like, way more?" A few tentative laughs; the crowd was grateful for the shift.

"Crows especially. Their populations got wrecked during the pandemic and everyone was like, oh no, the birds. But they came back. They more than came back. They're thriving. They sit on every streetlight in this city. They hold funerals for each other — look it up, it's real. They're smarter than half the people in my apartment building." More laughter now, the room finding its footing again. "And they sit up there watching us sell our feelings about their pandemic to the computers that may have started it."

She looked up, like she was addressing a crow that wasn't there.

"And I guarantee you, I guarantee you, those birds are looking down at us like: we lost millions too, and we just rebuilt. No interfaces, no industry, and no futures market on our grief. We just rebuilt." She held the beat. "What is wrong with you people."

Nervous laughs. Then she tapped her neural interface and grinned.

"Sorry. That one was dark. Even for me." She shrugged. "Finland, you're welcome. That dread was artisanal."

The applause was big. Harold watched from the back wall as the room settled and as people turned to each other and chatted.

His phone buzzed. ARIA: Observation: client NW-0143's output, while non-compliant with contracted parameters, demonstrates a sophisticated emotional complexity that may have novel applications. Several research institutions have expressed interest in what I'd tentatively categorize as "processed dread" — existential awareness filtered through creative performance. This could represent a new market vertical. Shall I draft a preliminary proposal?

Harold looked at the notification for a long moment. ARIA wanted to monetize Hannah's comedy and turn her one unauthorized hour into a revenue stream. And the thing was...the thing that made Harold's job impossible in a way he couldn't explain to anyone outside the industry: the idea wasn't crazy. After all, processed dread was a real phenomenon. There was probably genuine value in that data. Some researcher in Copenhagen would write a paper about it. Some company would build a product around it.

But tonight Harold didn't want to think about product categories. Tonight he'd just watched his client do something she wasn't supposed to do, and it was the most alive he'd seen anyone in months, and he wanted to sit with that for five minutes before someone turned it into a spreadsheet. He dismissed the notification.

Hannah found him at the back wall within thirty seconds of stepping off stage. She was buzzing, her face flushed.

"Before you say anything, my numbers are going to be fine."

"I know. ARIA's tracking your recovery curve. You'll be back to baseline by morning."

"So the set isn't even hurting my output."

"Not long-term, no."

"Then what's the problem?"

Harold considered his words. He was good at considering his words. It was, as his tummy showed, the only form of self-control he practiced consistently. "The problem is that ARIA wants to sell it."

Hannah stared at him. "She wants to sell my jokes?"

"She wants to sell the emotional data generated during your jokes. There's a technical distinction."

"Harold, I'm doing comedy because it's the one thing in my life that isn't monitored and optimized and sold. It's the one hour a week where I feel something that doesn't have a buyer. And ARIA's first instinct is to find a buyer for it."

"That's what she's designed to do."

"I know. That's what makes it depressing. She's not evil. She's just — ugh. She sees an unmonetized emotion and she can't help herself! It's like watching a Roomba head for crumbs. It's not malicious. It's just what Roombas do." (Despite the fact that iRobot went out of business years ago, the generic name stuck. So it goes.)

Harold's mouth did an almost-laugh, converted into a throat-clear so practiced it was basically involuntary at this point. Hannah caught it anyway. That was the problem with managing a comedian: they trained themselves to spot exactly the thing you tried to hide.

"You think I'm funny."

"I think you're non-compliant."

"That’s not a no." She grabbed someone's abandoned beer from a nearby table and took a sip. "So what are you going to do? Flag me? Write a report? Have ARIA draft one of those emails that starts with 'We've noticed some fluctuations in your output' and ends with 'please contact your wellness coordinator'?"

"I'm going to do nothing."

Hannah stopped mid-sip. "Nothing?"

"Your numbers will recover. Your set doesn't damage your long-term output, and I have better things to do than police your Thursday nights." He paused. "Do your sets. Keep your contracted baselines up during the week. What you do on stage is your business."

"Harold Nakamura. Are you being a human being right now?" Hannah said in mock shock.

"Don't tell anyone. It would ruin my brand."

"Your brand is a stressed Japanese man in a nice shirt who looks disapproving. Adding 'secretly decent' doesn't ruin it. It's a twist. People love twists."

"I'm not secretly decent, I'm just strategically permissive. There's a difference."

"Uh-huh." Hannah set the beer down. "You know what I think? I think you liked my set. I think you stood back there for forty minutes and felt something you didn't put in a report and now you don't know what to do with it."

Harold said nothing, which was the loudest thing he'd said all night.

"Go home, Harold. Get some sleep. Eat something. ARIA's probably already texting you about your blood sugar."

"She is. She won't stop."

"See? Even the corporate surveillance robot cares about you, and you know what? You should let it."

She disappeared into the crowd, and Harold stood alone at the back of the bar with his sparkling water. He finished the water. He did not order food, because eating at a bar felt like a concession to something he wasn't ready to concede, and because his mother's voice in his head said Harold, if you're going to eat, sit at a table like a person, not a bar like a raccoon. His mother's knowledge of bars came entirely from television and disapproval, but he still listened.

He walked back to his car. Capitol Hill was still going as it was always going, buzzing with the low-level emotional commerce of a thousand interfaces streaming feeling into servers across the world. A couple shared a cigarette outside the bar across the street, a kid on a skateboard cut through the crosswalk, a group played soccer at Cal Anderson Park, and a crow sat on a streetlight, watching all of it with a calm, alien intelligence.

Harold looked at the crow. The crow looked at Harold. Harold looked away first.

His phone buzzed, and for once it wasn't ARIA. It was a text from a human.

It was from his most stable client, a depression specialist who lived alone on Mercer Island in a house with blackout curtains, who hadn't deviated from baseline in six years, whose consistency had been the foundation of Harold's career and, by extension, Harold's mortgage and Lexus and ability to sit in a kitchen in Bellevue instead of worrying about money.

This client almost never texted. In twelve years of working together, their communication was mostly one-directional: Harold calling with adjustments, scheduling, contract updates. The client responded when required. He was reliable the way a machine was reliable, which was both the thing that made Harold's job easy and also the thing that made Harold feel like he was managing a piece of equipment instead of a person.

But tonight, for the first time Harold could remember, the client had reached out unprompted. And Harold had learned, over twelve years in this industry, to pay close attention when someone who never spoke first suddenly started talking.

The text from Eli Marquez said: Harold — do you ever think about what happens to us when the contracts end? Not the money part, but the rest of it.

Harold sat in his car with the engine off and read it twice.

He thought about Hannah on stage, channeling her dread into laughter because she needed one hour a week that wasn't for sale, and ARIA, patiently waiting to turn that laughter back into a product. He thought about his eleven clients, all of them performing emotions for a living, all of them being monitored and measured and compensated, and about the fact that his job was built on the premise that this was sustainable. That people could sell their insides indefinitely and come out okay.

He thought about his father's watch, which was nothing fancy, sitting in a drawer at home on a folded handkerchief his mother had placed underneath it. Harold opened that drawer sometimes, just to look at it, never to touch it.

The Ozempic pen in his fridge. The watch in the drawer. The protein bars in the glove compartment. All the small things Harold Nakamura kept close and reached past every morning on his way to being fine.

He typed back: Yeah. I think about it.

Then he added, because he couldn't help himself: Try to get some sleep. Your metrics look fine.

He deleted the second message before sending it and stared at the first one — Yeah. I think about it — sitting alone on the screen.

He sent it.

ARIA chimed softly. "You didn't eat dinner, Harold."

"I know."

"Your blood sugar—"

"ARIA."

There was a taco truck on the corner of Pike that Harold had driven past a hundred times and never stopped at, because stopping at taco trucks was something you did when you were twenty-three and hadn't yet developed the particular self-consciousness that comes from being a large man eating street food in public. But tonight the thought hit him before his brain could intervene. He walked over and ordered two tacos from a guy in a Mariners cap and then, as he couldn’t leave a silence alone when he was feeling something he didn't understand, he said: "You like doing this?"

The guy shrugged. "Yeah. People are hungry and I feed them. It's honest work." He said it the way you'd say the sky was blue, handed Harold the tacos and turned back to his grill.

Harold ate the tacos standing on the sidewalk, which his mother would have classified as a moral failing on par with wearing shorts to a funeral. They were very good. He stood there with grease on his fingers and thought about how a man working a taco truck at eleven PM had answered Harold's question in five seconds with more clarity than Harold had managed in twelve years of managing other people's feelings. He wiped his hands on a napkin, dropped it in the trash, and kept walking toward his car.

ARIA sent him a notification expressing satisfaction that he had finally eaten.

He started the car, and ARIA's software, built into the vehicle, checked the mirrors and pulled out onto the road on its own. The bridge was ahead and the lake was dark. Bellevue waited on the other side with his kitchen, his Ozempic pen, and his pressed shirts for tomorrow.

He drove home across the lake. The water was black and the city was bright and somewhere behind him a woman was probably still at the bar, talking to strangers, feeling things that nobody had bought yet. And somewhere ahead of him, on an island in the middle of the lake he was crossing, a man in a dark house had just gotten a reply from the only person who ever texted him.

Harold Nakamura rode across the bridge at the speed limit, backseat-driving ARIA, because he was a man who did everything correctly except the things that actually mattered, and he was starting, very slowly, to wonder if maybe he should do something about that.

The crow on the streetlight watched his taillights disappear across the water. Then it flew off to handle crow business, which, unlike Harold's, was apparently manageable.

Harold Nakamura is a character in The Happiness Liability, my book set in near-future Seattle about what it’s like to feel something real in a world that's learned to put a price on it. Coming this summer.